The Atlantic - Mind vs. Machine

Interesting article about the Loebner Prize, a Turing Test competition. The author tried his best to win the title of “Most Human Human” by maximizing his interactivity: the point is not to say one or two interesting things, but to build a thread of conversation that is logical and responsive to the interlocutor.

For instance, Richard Wallace, the three-time Most Human Computer winner, recounts an “AI urban legend” in which

a famous natural language researcher was embarrassed … when it became apparent to his audience of Texas bankers that the robot was consistently responding to the next question he was about to ask … [His] demonstration of natural language understanding … was in reality nothing but a simple script.

The moral of the story: no demonstration is ever sufficient. Only interaction will do. In the 1997 contest, one judge gets taken for a ride by Catherine, waxing political and really engaging in the topical conversation “she” has been programmed to lead about the Clintons and Whitewater. In fact, everything is going swimmingly until the very end, when the judge signs off:

…

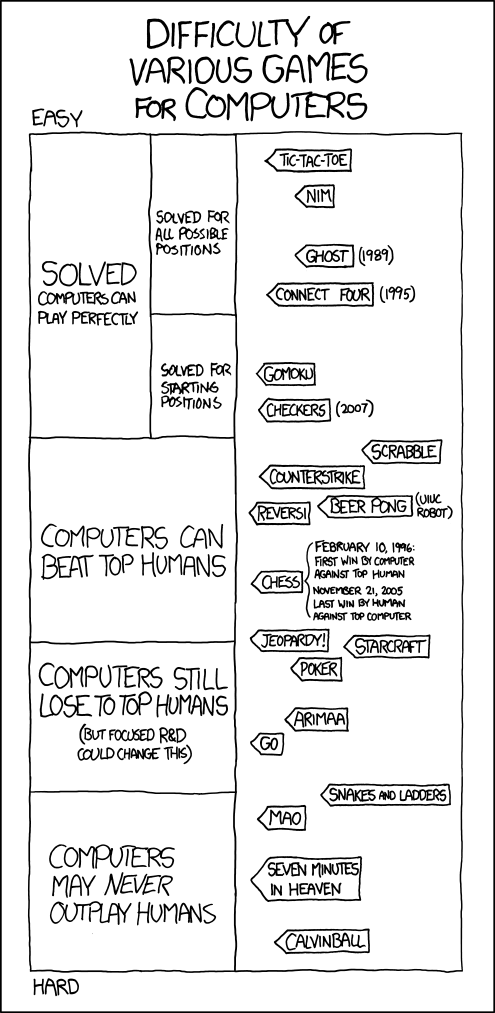

We so often think of intelligence, of AI, in terms of sophistication, or complexity of behavior. But in so many cases, it’s impossible to say much with certainty about the program itself, because any number of different pieces of software—of wildly varying levels of “intelligence”—could have produced that behavior.

No, I think sophistication, complexity of behavior, is not it at all. For instance, you can’t judge the intelligence of an orator by the eloquence of his prepared remarks; you must wait until the Q&A and see how he fields questions. The computation theorist Hava Siegelmann once described intelligence as “a kind of sensitivity to things.” These Turing Test programs that hold forth may produce interesting output, but they’re rigid and inflexible. They are, in other words, insensitive—occasionally fascinating talkers that cannot listen.

I’m revising an old paper of mine about Daoism and computers, and one concept I’m trying to suss out is “appropriateness.” Reason lets us do what is appropriate to the moment by taking everything into account, not just what a narrow algorithm specifies.